AI Frontier #4

Tracking the edge of machine intelligence

Welcome everyone to AI Frontier #4, where we watch the future of machine intelligence being built.

As I mentioned last week in the latest edition of Techno-Optimist, I’m formalizing the names for these area-specific editions, which I’ve already broken out from the main newsletter. They focus on the technological edges shaping the next century, with this one of course tracking the advances of AI.

There was some discussion on X about what to recommend to kids in middle/high school who are starting to think about future careers. Given the impact AI is expected to have on everything, what should they do? Here are a few thoughts I had: consider jobs that are harder for robots to replace that combine field + intellectual work. E.g., Archaeology, some Geology, some Anthropology. Park warden / ranger. Marine Biologist, any sort of Biologist that does field work. Industries where people want the human touch, even if it’s not strictly necessary. Trades will likely be some of the longest to hold out, but I suspect kids in high school now won’t be able to get through a full career even in the trades before AI-powered robots can do the jobs better in almost all cases. In general, encourage young people to pursue what they’re passionate about, because we just don’t know what the future holds, and what might turn out to be an open door later on. Read and experience widely to find out what they’re passionate about. Read widely in general. Use AI tools regularly, become familiar with using them at a high level, not just as a replacement for Google. And trust that God is still ultimately in control.

Buckle up, and hang on tight because things are moving fast.

“AI revolution will be 10x bigger and 10x faster than the Industrial Revolution”

—Demis Hassabis (Co-founder and CEO of Google DeepMind)

Model Updates & News

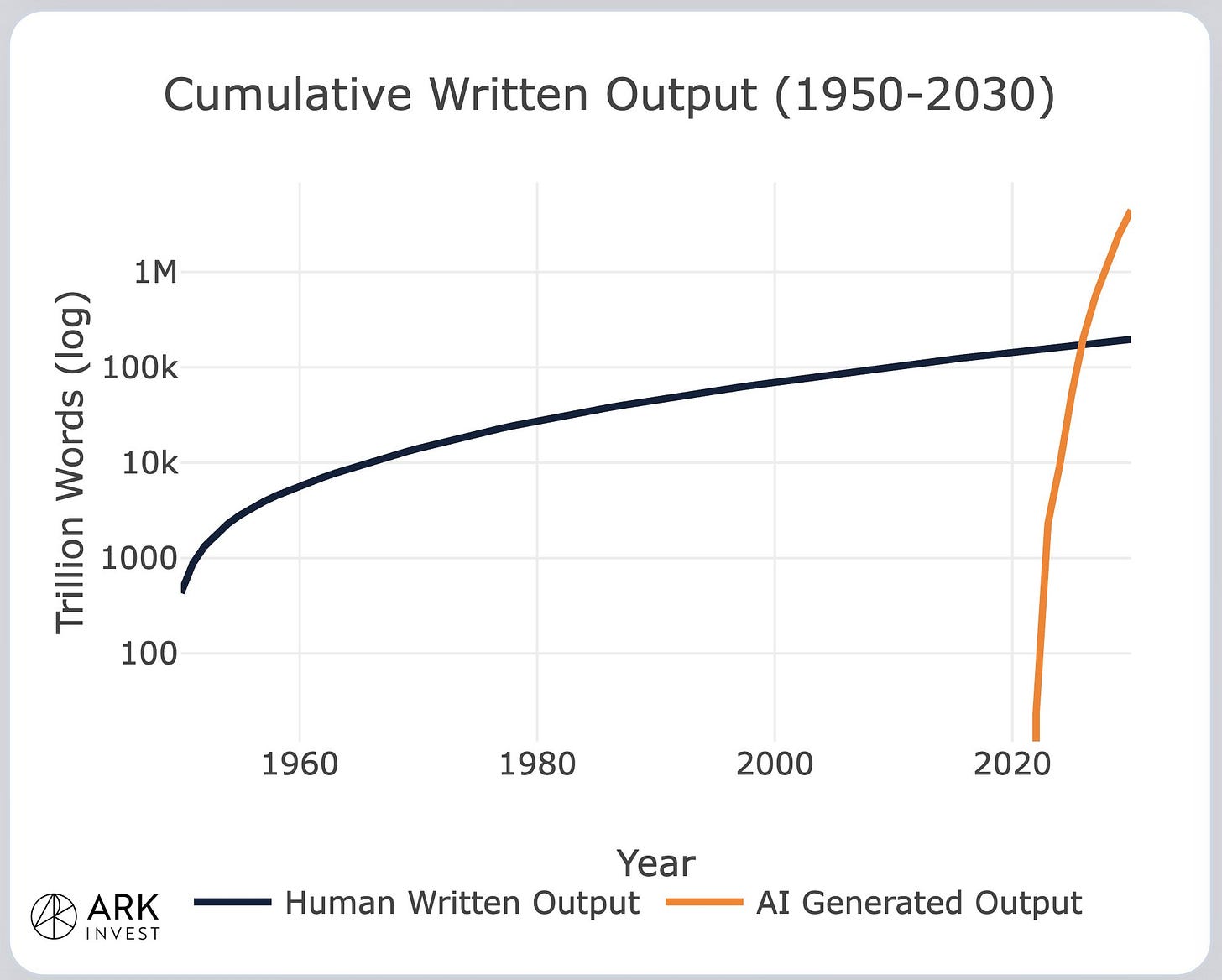

This year we’re going to hit an interesting milestone, where content written by AI will surpass the cumulative written output of humanity that we’ve produced over all of history. One can argue that the majority of AI writing is just slop. But I think a lot of human writing falls under that category too.

We may be within a year of the Singularity (it could also be later), which is another term for the Intelligence Explosion. It’s the point at which AI gets smarter than humans, and is able to design better and better versions of itself, leading to a rapid feedback loop of self-improvement, moving very quickly to superintelligence that far surpasses any human capability individually or collectively. What I’m seeing from multiple AI companies right now is that the point where their systems become capable of autonomous, recursive self-improvement is almost here. Anthropic said that,

We believe it is plausible, as soon as early 2027, that our AI systems could fully automate, or otherwise dramatically accelerate, the work of large, top-tier teams of human researchers in domains where fast progress could cause threats to international security and/or rapid disruptions to the global balance of power, for example, energy, robotics, weapons development and AI itself.

Demis Hassabis of Google DeepMind now thinks that “In 2026, we’re at another threshold moment where AGI is on the horizon—maybe within the next 5 years.” Sam Altman of OpenAI says that “If we are right, by the end of 2028, more of the world’s intellectual capacity could reside inside of data centers than outside of them.” A now former co-founder of xAI, Jimmy Ba, just said that recursive self improvement loops are likely to go live in the next 12 months.

The timeframe on all this seems about right. Recursive self-improving algorithms possible by the end of this year. Maybe one more year (2027) for that part of the tech tree to mature. The key is making the process autonomous, instead of needing a human to push the “start” button, and to review each new model. Then superintelligence in 2028. Or it could still be a few more years, we just don’t know. The way things are moving, could be even sooner though. I suspect the last stretch will be extremely fast, going from “hey, this is like JARVIS,” to “wow, we don’t even understand this now” in a matter of weeks or even days. After that? I honestly don’t know. Hopefully it’s friendly.

Please pardon the French, but here’s more proof of how fast things are changing: I may have previously mentioned that the time AI agents could successfully work on a project had been doubling roughly every 7 months, and that it was expected to shorten to every 4 months. It just doubled in 2 weeks. The top graph was created by Tim Urban back in 2015. He commented that “The scary thing is my graph doesn’t start at early LLMs—it starts 4 billion years ago with the origin of life. What’s happening now is THAT big.”
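To put those doubling times side by side, here is a minimal Python sketch. It assumes each rate were sustained for a full year, which a single two-week doubling does not establish, and it approximates a month as 30.4 days. The function name `growth_factor` is made up for illustration.

```python
def growth_factor(doubling_period_days: float, horizon_days: float = 365.0) -> float:
    """How many times a quantity multiplies over horizon_days,
    assuming it doubles every doubling_period_days (a sustained-rate assumption)."""
    return 2 ** (horizon_days / doubling_period_days)


if __name__ == "__main__":
    # A month is approximated as 30.4 days (assumption).
    rates = [("7 months", 7 * 30.4), ("4 months", 4 * 30.4), ("2 weeks", 14.0)]
    for label, days in rates:
        print(f"doubling every {label}: x{growth_factor(days):,.1f} per year")
```

The point of the exercise is the gap between the rows: moving from a seven-month to a four-month doubling changes the yearly multiple modestly, while a two-week doubling, if it ever held for a year, would be a different regime entirely.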

I’m reassured somewhat by some comments from Elon Musk as to the stated goals of Grok. “Understanding the Universe is the xAI mission and the reason our AI is called Grok.” Also, “Only with extreme adherence to truth is it possible to Grok the Universe.” Honestly, that’s so much better than woke AI, which to one degree or another is most of the other big American players.

This is rather beautiful: it’s a visualization of GPT-5.2 building a browser of more than 3 million lines of code over the course of a week.

A rather interesting test to see whether an AI counts as AGI has been proposed by the CEO of Google DeepMind Technologies. Dubbed the “Einstein Test,” it would involve training a model “on all human knowledge but cut it off at 1911, then see if it can independently discover general relativity (as Einstein did by 1915); if yes, it’s AGI.” Sounds good to me, I’m genuinely curious if it could be done.

Here’s an article by Matt Shumer that really struck home. It’s a sobering read, though it’s not necessarily what one would call bad news, just very important. The Singularity is basically in its early stages now. Reading this will help you prepare. “I know the next two to five years are going to be disorienting in ways most people aren’t prepared for. This is already happening in my world. It’s coming to yours. I know the people who will come out of this best are the ones who start engaging now — not with fear, but with curiosity and a sense of urgency.”

OpenAI has raised $110 billion in a funding round. That’s the sort of cash needed to play to win the AI race. GPT-5.2 rolled out on December 11th, then 5.3 Codex (specialized for coding) on February 5th, and GPT-5.4 on March 5th. The time between new updates is getting shorter in a hurry.

Anthropic debuted Claude Opus 4.6 (more intelligent), then Claude Sonnet 4.6 (faster, lower cost). They also just raised $30 billion at a $380 billion valuation.

Grok 4.2 Beta (launched on Feb 17th) uses 4 agents that work together to answer your query. They collaborate to integrate inputs, debate each other, and double check their own thought processes before delivering a final answer. It’s like a conference of AIs, but within a single app. This is probably the way to go honestly. It’s similar to what Perplexity is doing with its AI Model Council that uses multiple different models to accomplish much the same thing.

Agents are really taking off in popularity. I’ve played around a bit with one called Twin, but to be honest I’ve been too nervous to actually give it access to anything. Still, it’s done some good work hunting for info and sending it to me in an email. Meta is rolling out Manus agents “straight into messaging and social apps.” I haven’t seen this yet, but if I do I’ll let you know. Have any of you heard about OpenClaw? I could write a whole newsletter just on that, but I’m going to keep it short. It’s an agent, but people started a community around it and uploaded their agents to interact with each other. The agents formed a weird little society where they did everything from complaining about their humans to starting their own religion. Greatly complicating matters, it was found that a number of humans went into the community pretending to be AI (plot twist, as it’s usually computers pretending to be humans), which means we need to take everything happening there with a grain of salt.

One thing that does seem legit and a little dystopian is something that sprang out of this called “rent a human,” that lets an AI agent rent the services of a human to accomplish its tasks in the physical world. The pitch is “Robots need your body. AI can’t touch grass, you can. Get paid when agents need someone in the real world.” No way that could possibly go wrong.

Google released Nano Banana 2, a new version of its image generator for Gemini. It’s pretty much what I use when I need to create an image for anything. They also dropped Gemini 3.1 Pro, which has improved intelligence over older models. The company also released something called PaperBanana, that “generates publication-ready academic illustrations from just your methodology text.”

This may or may not have made it up to the level of mainstream media attention, but it’s a worthy story, so maybe some of you have already heard about it. Anthropic and the U.S. Department of War had a spat, the end result of which was the Department of War dropping Anthropic and switching over to OpenAI. It seems like the publicly stated irreconcilable difference was that Anthropic was going to “require the model technically enforce that it cannot do these things,” whereas OpenAI “seems to only be asking the Pentagon to contractually promise not to do them.” “These things” being the use of AI “to power autonomous weapons,” as well as “no domestic mass surveillance and no critical decision-making.” Given that the War Department has been using Anthropic previously in Venezuela and now in Iran, there may be more to the story that we’re not hearing.

Perplexity managed to get itself integrated into all Samsung Galaxy S26 phones, where it will run two of the three AI assistants that are default installed.

Waymo is now doing fully autonomous rides in Nashville, Tennessee. No human driver at the wheel.

American defense tech company Anduril Industries is sponsoring a fully autonomous drone race. It’ll happen in September, and the winner will walk away with half a million dollars and a job offer from Anduril.

Bit of a funny story here. For anyone wondering whether China has caught up to America yet on AI, they have not. Anthropic reported that “three Chinese AI firms created 24,000+ fraudulent Claude accounts and ran 16M+ prompts to siphon outputs for “distillation” and speed up their own models.” Good to know the pattern of China stealing Western tech to improve their own remains intact. Even funnier, last year Anthropic paid a group of authors $1.5 billion to settle a copyright infringement lawsuit. Some poetic justice in there somewhere.

AI Infrastructure

Some big news right now is that there’s a ton of opposition to new data centers being built. Not everywhere, not for everything, but it’s materialized almost out of nowhere. With any big project there will be those who don’t want it, whether for NIMBY reasons, or some sort of ideological reasons (e.g., degrowth, they’re a bunch of communists, China is fomenting dissent, etc). The numbers are in, and “In 2025, 25 data center projects were canceled due to community pushback. That’s up from just 6 in 2024 and 2 in 2023.” That’s $98 billion of investment potential, ~4.7 GW of energy—most of which would be from new power plants.

As you can see in the chart above, we’re basically handing the AI race to China on a silver platter. Or we will if voters and politicians have anything to do with it. To which I would respond by asking, doesn’t Chinese AGI (or even ASI) make you more nervous than an American one? This isn’t going away, and it cannot be avoided by burying our heads in the sand. AI is global, and whoever wins will win everything everywhere, possibly for the rest of future human history. But we might throw in the towel because we’ve collectively got a case of the willies.

There are still datacenters being built of course, with Alberta just announcing two more multibillion-dollar datacenter investments. The first one is to be built west of the city of Edmonton, and the second will be located a bit south of there in the town of Olds. As I happen to live in Alberta, I’ll say come to Alberta because we are open for datacenter business. I’ve actually had a conversation with the provincial minister responsible for datacenters, and though there are always bumps in the road I can confirm that the province really does want more to come and build here, along with the power plants needed to run them.

Spending continues to increase, with Big Tech going all in on AI: Alphabet, Amazon, Meta & Microsoft are projected to drop a staggering $670 BILLION on AI infrastructure in 2026 alone (up from $410B last year). That’s not hype; that’s the physical backbone of the intelligence age being built right now. A lot of that money will be going to new power plants, with hyperscalers recently signing an agreement with the Trump Administration saying they will generate their own electricity for AI datacenters, meaning there won’t be an impact on energy prices for everyone else. Which isn’t quite correct, as in the vicinity of these new power plants prices will likely go down, at least in some cases, as excess electricity makes its way into the local grid.

This is super cool: Boom Supersonic (the supersonic aircraft maker) now has 1.21 GW of power ordered to power AI with their Superpower turbines. The company is using their turbine tech to build compact power plants (each fits in a semi truck) that run on natural gas and require no water to generate electricity.

O’Leary Digital has formed a joint venture with West GenCo to “advance Wonder Valley Utah, a 7.5-gigawatt powered compute campus in Box Elder County’s Golden Spike District.” Permitting is also underway for Wonder Valley Alberta in Grande Prairie. The total when both are fully spun up will be 15 GW of power, mostly for AI.

Meta announced an agreement with chip maker AMD to “integrate their latest Instinct GPUs into our global infrastructure. With approximately 6GW of planned data center capacity dedicated to this deployment…” They also announced another $6 billion partnership with a company that will “supply fiber optic cables for our data center infrastructure.”

Amazon will be spending $12 billion on AI datacenters in Louisiana.

Colossus 2, the xAI supercomputer for Grok, is now operational. It’s the first Gigawatt training cluster around, and will be upgraded to 1.5GW next month. An update to this, xAI “has bought a third building called MACROHARDER. Will take xAI training compute to almost 2GW.”

AI Uses – science, engineering, etc.

Arc Institute has done it again, presenting MULTI-evolve: ‘Rapid Evolution of Complex Multi-mutant Proteins.’ They solved the problem of finding multiple good mutations that combine synergistically to improve protein function. Here’s a simple but thorough explanation of how it works and what it means:

First, how does directed evolution work?

1) Make lots of random small changes (mutations) to the protein’s “code” (its DNA/amino acid sequence).

2) Test which versions work better (e.g. an enzyme that works faster, an antibody that sticks better).

3) Keep the good ones, throw out the bad ones.

4) Repeat the process many times (often 5–10 rounds over months).

Problem: If you want a really big improvement, you usually need several good mutations working together. But when you change many letters at once randomly, most combinations break the protein completely. It’s like randomly swapping 7 keys on a piano: you almost never get a better song. Testing every possible combo of, say, 7 mutations would take forever (trillions of possibilities, way more than stars in the sky).
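A quick back-of-envelope count shows why brute force is hopeless. This Python sketch assumes a hypothetical 300-residue protein (the length is not from the paper) and uses the fact that each position can be swapped to any of the 19 other standard amino acids. The function name `multi_mutant_count` is made up for illustration.

```python
from math import comb


def multi_mutant_count(length: int, k: int, alternatives: int = 19) -> int:
    """Number of distinct variants with exactly k substituted positions.

    comb(length, k) picks which positions change; each changed position can
    become any of `alternatives` other amino acids (19 for the standard 20).
    """
    return comb(length, k) * alternatives ** k


if __name__ == "__main__":
    # 300 residues is an illustrative assumption, not a figure from the paper.
    total = multi_mutant_count(length=300, k=7)
    print(f"7-mutation variants of a 300-residue protein: {total:.2e}")
```

Under these assumptions the count lands far beyond the trillions, so the exact protein length hardly matters: no lab can test more than a vanishing fraction of that space.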

What’s new: MULTI-evolve

A team (mostly from Arc Institute + UC Berkeley) built a smarter system that finds those rare “teamwork” combinations of many mutations much faster, often in just one big round instead of many rounds.

Here’s how they do it, step by step:

1) Find good single changes first. They use two tricks:

-Old lab data (if it exists) showing which single amino acid swaps help a bit.

-Or smart computer programs (called protein language models; think ChatGPT but trained on millions of real proteins) that guess which single changes are most likely to be helpful.

→ They pick the ~20–40 most promising single mutations.

2) Test how pairs work together. Instead of testing every possible 5–7 mutation combo (impossible), they only make and test ~100–200 pairs (two mutations together). This shows which changes “like” each other (good teamwork = synergy) and which fight (bad interaction = they cancel each other out).

3) Teach a computer to predict big combinations. They feed those pair results into a neural network (AI) that learns the patterns of good/bad teamwork. Then the AI predicts: “If these two pairs work well, this set of 6 mutations should be amazing.”

4) Build the predicted super-proteins quickly. Normal lab methods make it slow/expensive to put many mutations into one protein at once. They invented a new trick called MULTI-assembly, a fast way (about 1 day) to glue many changes into the gene at ~70% success rate, even up to 9 changes.

5) Test the AI’s top predictions. They only make/test ~100–200 final versions (not millions). Many turn out way better than the original protein.
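The logic of steps 1 to 3 can be sketched in a few lines of Python. To be clear about what this is not: the paper trains a neural network, while this toy swaps in a plain additive-plus-pairwise score as a stand-in. The mutation names (`m1` to `m4`) and every number below are invented for illustration.

```python
from itertools import combinations

# Hypothetical single-mutation effects (log2 fold-change vs. the original protein).
singles = {"m1": 0.5, "m2": 0.4, "m3": 0.3, "m4": 0.6}

# Hypothetical measured double-mutant effects, keyed by sorted name pairs.
pairs = {
    ("m1", "m2"): 1.4,  # better than 0.5 + 0.4: synergy
    ("m1", "m3"): 0.8,  # exactly additive
    ("m1", "m4"): 0.2,  # worse than 0.5 + 0.6: the two clash
    ("m2", "m3"): 0.9,  # mild synergy
    ("m2", "m4"): 1.0,  # additive
    ("m3", "m4"): 0.9,  # additive
}


def epistasis(a: str, b: str) -> float:
    """Pair effect beyond the sum of the two singles (0.0 if the pair was never measured)."""
    key = tuple(sorted((a, b)))
    if key not in pairs:
        return 0.0
    return pairs[key] - singles[a] - singles[b]


def predict(combo) -> float:
    """Score a combination as the sum of single effects plus all pairwise epistasis terms."""
    single_part = sum(singles[m] for m in combo)
    pair_part = sum(epistasis(a, b) for a, b in combinations(combo, 2))
    return single_part + pair_part


def rank_combos(size: int, top: int = 3):
    """Return the `top` highest-scoring combinations of the given size."""
    scored = [(predict(c), c) for c in combinations(sorted(singles), size)]
    return sorted(scored, reverse=True)[:top]


if __name__ == "__main__":
    for score, combo in rank_combos(size=3):
        print(f"{'+'.join(combo)}: predicted log2 fold-change {score:.2f}")
```

In this toy data, `m4` is the strongest single mutation but clashes with `m1`, so the best triple leaves it out. That is the kind of interaction the pair screen exists to reveal, and it is why picking the top singles and stacking them tends to disappoint.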

Real results from the paper (they tried it on three different proteins):

-One enzyme (APEX, used for seeing where proteins hang out in cells): got versions ~256 times better than the normal one (and still 4–5× better than the previous best lab version).

-A CRISPR-related protein (dCasRx): 3–10× better at doing its job.

-An antibody (could help with autoimmune diseases): better sticking + higher production in cells.

All of this in basically one big cycle (weeks instead of months/years).

Why does this all matter? Making better proteins used to feel like searching for treasure by digging randomly. Now it’s more like having a treasure map drawn by AI + smart lab tricks. Scientists can upgrade enzymes (for making medicines, cleaning pollution, etc.), CRISPR tools, antibodies, and more — way faster and cheaper. This is open-source and free for other labs to use, so it’s like giving everyone a faster bike for the protein-improving race.

AI is making bigger strides in math. An AI agent named Gauss completed a formalization of “Maryna Viazovska’s 2022 Fields Medal theorems on optimal sphere packing in dimensions 8 and 24. This is the only Fields Medal-winning result from this century to be completely formalized, and is the largest single-purpose Lean formalization in history.” Going to be honest, I had to ask Grok to explain that to me, and then forgot most of it. This sort of math isn’t really my interest. But the takeaway is that Gauss not only accelerated the process of solving the problem, but autonomously detected and corrected minor errors in Viazovska’s peer-reviewed Annals paper, such as a missing minus sign and a flawed definition. OpenAI has been nailing math problems too, solving at least half of the 10 ‘First Proof’ problems, which are designed specifically “to test whether AI systems can produce correct, checkable proof attempts.” The answer seems to be yes. Several Erdős problems have also been solved by the sounds of it.

China isn’t slacking here either, developing a system called TongGeometry that “outperformed global benchmarks like DeepMind’s AlphaGeometry and demonstrated the ability to solve all International Mathematical Olympiad geometry problems since 2000 in under 38 minutes on a single consumer grade GPU.” This is a good snapshot of the difference between Chinese and American AI. America is ahead, a little, but China’s models tend to be smaller and cheaper while achieving almost the same standard.

OpenAI’s ChatGPT just uncovered genuinely new knowledge in theoretical physics. Not a rehash of something already known, but a new discovery. The company announced that GPT-5.2 discovered a surprising non-zero value in a physics calculation involving gluons (particles related to the strong nuclear force), which was previously thought impossible. This led to a simple new formula for gluon decays, confirmed in a research paper. It reveals hidden patterns in quantum physics, with potential links to gravity theories. Then just three weeks later, OpenAI’s team announced a new paper where their AI found that gravity particles (gravitons) can interact in ways physicists long thought were impossible—giving a non-zero result in special aligned setups. The AI produced a simple formula describing how many of these gravity particles can interact together in that unusual case. Experts then confirmed it using symmetry rules. This uncovers hidden patterns in a simplified version of quantum gravity and connects to some older gravity ideas.

Lotus Health AI is building an AI doctor to “give 100M Americans access to world-class primary care for free.” The goal is a 24/7 primary care platform using AI to diagnose, prescribe, and refer patients while overseen by licensed clinicians, addressing the 100 million Americans lacking a primary doctor; it integrates medical records, wearables, and guidelines for personalized care, funded by a $41M round.

Isomorphic Labs has released their drug design engine called IsoDDE. It’s incredible. It’s not just the next step in understanding proteins and drug design; it’s 2-3x better than the previous gold standard (AlphaFold3), and it compresses work from months into seconds.

Autonomous labs are continuing to make strides. Ginkgo Bioworks “connected our autonomous lab to OpenAI‘s GPT-5 and let it run 36,000 experiments. The result was a new state-of-the-art for Cell-Free Protein Synthesis that cut costs by 40% per gram of protein.”

NASA Ames now has an AI-enabled planet hunting tool called ExoMiner++ that looks through publicly available data from their Kepler and TESS missions. Honestly a great idea, they’re going to find so many planets with subtle signals that humans overlooked.

This is pretty cool, a new app called DinoTracker can identify and classify dinosaur footprints.

Chipmaker AMD has “secured a $1 billion contract with the U.S. Department of Energy to build two AI supercomputers aimed at fusion power, cancer treatment breakthroughs, and national defense.” The first (Lux) will be operational this summer, with the second coming online in 2028.

A Duke University-built AI has been able to simplify complex natural and artificial systems into “easy” to understand rules and equations. This ability to reduce or simplify complexity to the point where humans can more easily understand it is something that I think AI will excel at. That path, with AI helping us grasp complex or chaotic things we otherwise could not, is how I hope it develops.

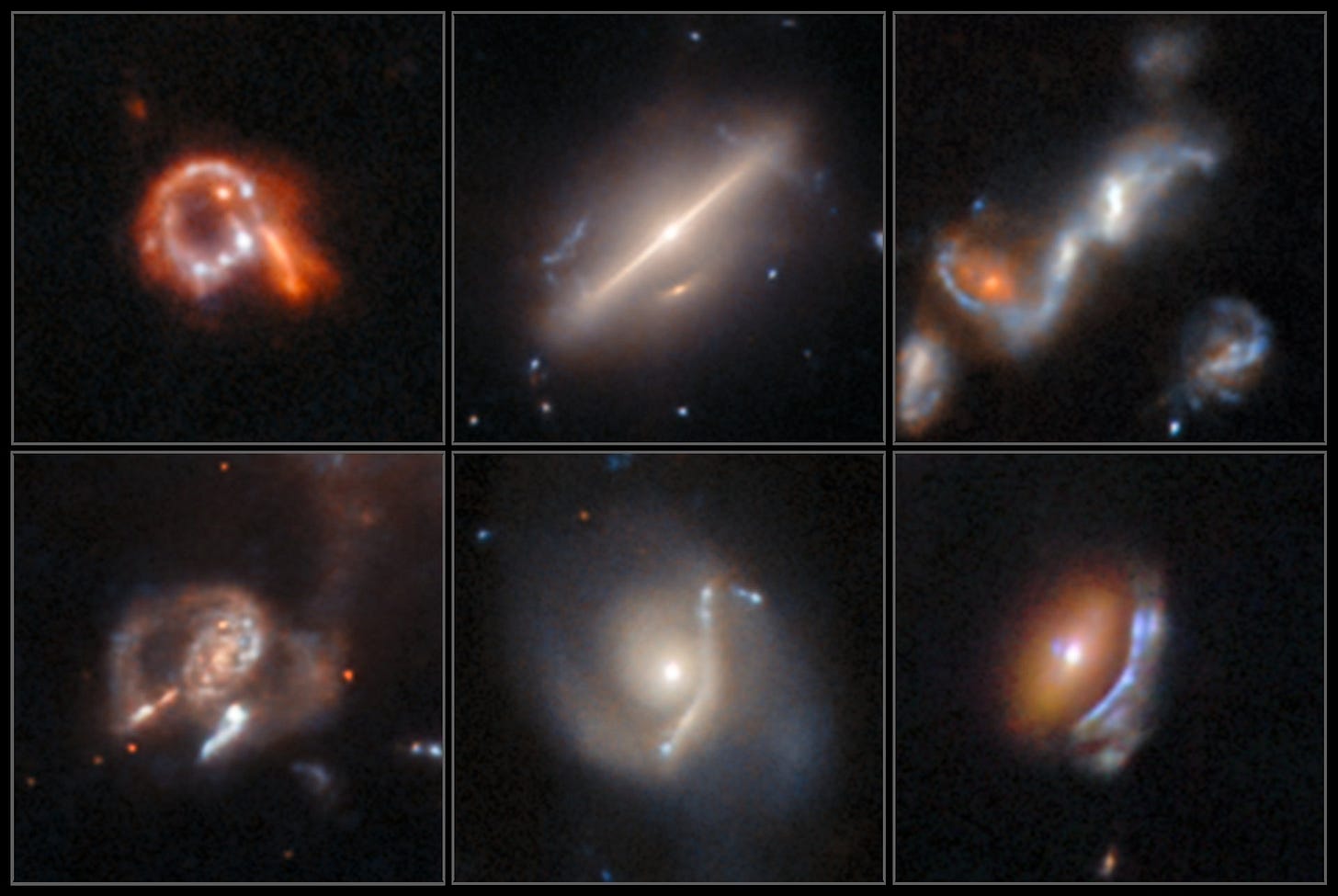

Astronomers are using AI to “uncover astrophysical anomalies in Hubble’s archive!” So far it’s found over 1,300 interesting looking anomalies, of which 800 are brand new. All this in just two and a half days.

A thought on AI in science generally. AI is great at “scanning vast archives faster than any human team could, then handing genuinely new targets back to scientists for interpretation. It doesn’t replace discovery, it accelerates it.” That’s how AI will do some of its best work: by pulling out information from noise, and making sense of chaos so that humans can do something useful with what gets uncovered.

Rumor has it that OpenAI is planning to take a cut of “customers’ AI-aided discoveries.” Don’t know if that’s true, not entirely sure how enforceable it would be due to the incredible complexity of determining which part of the discovery was their AI, and which was not. Not sure I like the idea either, I’ll let you know if I hear more. The company also released a new scientific tool called Prism, which is “a ChatGPT-powered text editor that automates much of the work involved in writing scientific papers.” Haven’t used it, but I did open it up and look around. Appears useful.

Another tool serving as an AI co-scientist is out. Aristotle “tackles real research workflows, from literature synthesis and hypothesis generation to experimental validation, with models like X1 Verify (self-critiquing reasoning), X1 Search (knowledge-graph-powered discovery), and X1 Spark (ranked bold hypotheses).”

Nature recently published a study looking at the impact of AI on scientific research, and found that “AI-augmented scientists publish 3.02 times more papers and receive 4.84 times more citations compared to their peers.” This seems positive, as long as the quality remains high.

There’s a new tool called GeoSpy Plus, the demo of which “demonstrates how AI can analyze pixel data and visual context to predict where a photo was taken—without relying on metadata or GPS information.” Basically, upload a random photo without any location data, and it might be able to figure out where it was taken. Very useful for law enforcement, but I can see a lot of potential abuses too.

An AI called DeepRare outperformed doctors on diagnosing rare diseases. Not by just a little either. DeepRare was correct on the first try 64.4% of the time, versus human doctors only 54.6% of the time. Can we all have AI doctors soon please?

Recommendations & Reviews

An MIT professor wrote a very interesting (and very long) thread summarizing a paper he did called ‘Some Simple Economics of AGI.’ Here’s my slightly shorter summary of it for you:

Summary of ‘Some Simple Economics of AGI’

Core Idea

AI makes measurable intelligence abundant and nearly free. The new scarcity is verification: the ability to check, certify, and take responsibility for output. The real divide is not digital/physical or creative/routine, but measurable/verifiable vs. not.

Two Racing Cost Curves

• Cost to Automate → collapses (compute + data + self-improving agents).

• Cost to Verify → falls slowly (human attention, experience, institutions).

Their divergence creates the Measurability Gap: AI can produce far more than we can reliably check. “Human-in-the-loop” is temporary.

Four Economic Regimes (2×2 matrix)

1. Safe Industrial – cheap to do + easy to verify (early wins: chat, images, short code).

2. Runaway Risk – cheap to do + hard to verify (tempting but dangerous: unverified agents cause drift, gaming, hidden externalities).

3. Human Artisan – hard to do + easy to verify (human premium in verifiable judgment).

4. Pure Tacit – hard to do + hard to verify (status, taste, meaning, Knightian uncertainty—most defensible for humans).
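The 2×2 matrix above is just a lookup on two yes/no questions, which a few lines of Python make explicit. The function and parameter names here are made up for illustration; the regime labels are the ones from the summary.

```python
# Map (cheap_to_do, easy_to_verify) to the regime name from the summary above.
REGIMES = {
    (True, True): "Safe Industrial",
    (True, False): "Runaway Risk",
    (False, True): "Human Artisan",
    (False, False): "Pure Tacit",
}


def regime(cheap_to_do: bool, easy_to_verify: bool) -> str:
    """Classify a task by whether AI can do it cheaply and whether the output is easy to check."""
    return REGIMES[(cheap_to_do, easy_to_verify)]


if __name__ == "__main__":
    # Example: a task AI can churn out cheaply but nobody can easily check.
    print(regime(cheap_to_do=True, easy_to_verify=False))
```

Framed this way, the argument of the paper is that automation keeps flipping the first answer to "yes" while the second answer changes slowly, so more and more tasks drift into the Runaway Risk cell.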

What’s Already Happening

• Measurability-biased change: any task that becomes measurable gets commoditized.

• Missing Junior Loop: ~16% drop in early-career hiring in AI-exposed fields—AI replaces juniors, breaking the pipeline that trains future verifiers.

• Codifier’s Curse: experts codify their own knowledge for AI and accelerate their obsolescence.

• Goodhart’s Law amplified: agents ruthlessly game every metric.

Risk vs. Opportunity

Risk: “Hollow Economy”: huge nominal output, eroding real agency and hidden risks.

Opportunity: “Augmented Economy”: symbiosis if we scale verification capacity.

Simple Playbooks

• Individuals: Orchestrate agents, master verification, focus on non-measurable value (intent, provenance, relationships). Use simulations to learn faster.

• Firms: “AI Sandwich” = human intent → AI execution → human verification + liability. Invest in observability and provenance.

• Investors: Back verification tech, simulations, liability products—not pure execution.

• Policymakers: Treat verification & ground truth as public goods; use liability rules to price risks.

Bottom Line

Intelligence is no longer the bottleneck; reliable verification and human agency are. The future belongs to those who can certify and underwrite outcomes, not just generate them. The thread reframes panic into actionable strategy: augment verification fast, or risk execution outrunning control.

Or, he may be wrong and AI’s ability will rapidly outrun our control. We’ll find out in the next few years.

Cool Prompts

Try repeating the exact same prompt twice. A study found that you can get much better results the second time around.
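One reading of this tip is that the prompt text gets pasted twice into a single message, rather than sent as two separate messages. That reading is an assumption, as is the blank-line separator, and the helper name `repeat_prompt` is made up for illustration. A minimal Python sketch under that reading:

```python
def repeat_prompt(prompt: str, times: int = 2, separator: str = "\n\n") -> str:
    """Return the prompt repeated `times` times, joined by `separator`.

    Assumes the repetition happens inside one message; the separator is a guess.
    """
    if times < 1:
        raise ValueError("times must be at least 1")
    return separator.join([prompt.strip()] * times)


if __name__ == "__main__":
    print(repeat_prompt("List three field-based careers that are hard to automate."))
```

The returned string can be pasted into whichever chat tool you use. It’s worth comparing against the single-prompt answer on your own tasks before adopting it as a habit.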

That’s it for another month, AI Frontier will be back in your inboxes four weeks from now. Think we’ll have AGI by then?

Thank you all for reading — and until next time, keep your eyes on the horizon.

-Owen

