AI Updates #1

Company news, AI infrastructure, AI makes scientific discoveries.

As promised, here is AI Updates #1, where I try my level best to keep you informed about what’s going on in the world of AI as it surges forward at a dizzying pace.

This should have been out yesterday. My apologies for that; the day sort of got away from me. But here it is now for a bit of light weekend reading. This edition just contains what I already had ready to go for last week’s Techno-Optimist, so it’s missing some recent updates (Grokipedia!). Not to worry, the next one will be more complete.

Hope you all enjoy. Let me know what you think of the format (e.g., breaking it down into sections?), which I may alter somewhat over the next few issues until I settle on one that works best.

Alright, hang on tight as we accelerate up the curve!

“Humans are not perfect, and neither is AI. But together, we can create something extraordinary.” — Andrew Ng

OpenAI’s ChatGPT now has something called ‘Instant Checkout,’ which is exactly what it sounds like, allowing users to shop within the app. At the moment it’s partnered with Etsy and Shopify, but I’m sure that will expand in short order.

The company also launched a new app called Sora, which from the sounds of it is an enhanced video editing / generation platform. CEO Sam Altman wrote a blog about it (or had ChatGPT write it) here. “Inside the app, you can create, remix, and bring yourself or your friends into the scene through cameos—all within a customizable feed designed just for Sora videos.”

In what can only be described as closing the barn door after the horse has bolted, there will now be parental controls in ChatGPT. LOL, I wonder how long it will take teens to get around those?

Anthropic recently introduced their latest model: Claude Sonnet 4.5, “the best coding model in the world.” According to the company, “it’s the strongest model for building complex agents. It’s the best model at using computers. And it shows substantial gains on tests of reasoning and math.”

One very interesting thing that sort of flew under the radar is that Claude Sonnet 4.5 managed to do 30+ hours of autonomous coding, greatly surpassing expectations. We’re well on the way to AI agents that really do operate more or less independently for indefinite periods of time.

Google DeepMind rolled out their latest video generation model: Veo 3.1, which has a “deeper understanding of the narrative you want to tell, capturing textures that look and feel even more real, and improved image-to-video capabilities.” Veo can be accessed in Google’s Gemini App for anyone who’s curious.

To say that there’s a lot happening in AI infrastructure is a bit of an understatement, with major companies seemingly announcing new megaprojects every other week. Andrew Chien, a computer scientist at the University of Chicago, said that, “I’ve been a computer scientist for 40 years, and for most of that time computing was the tiniest part of our economy’s power use. Now, it’s becoming a large share of what the whole economy consumes.”

Elon Musk has said that just as his company xAI “was the first to build 1GW of coherent training compute, they will also be the first to reach 10GW, 100GW, and 1TW.”

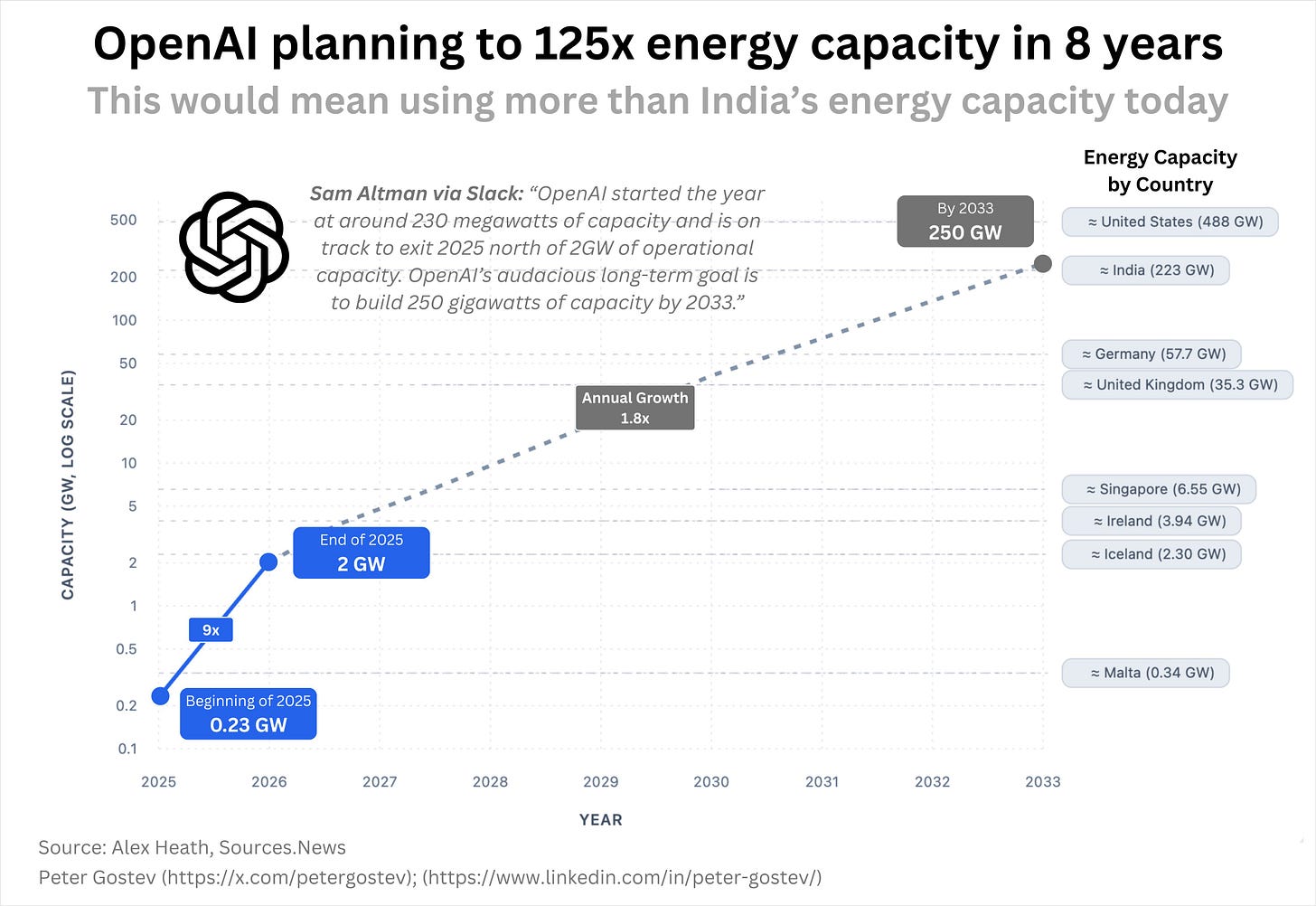

In another blog, Sam Altman said the vision at OpenAI is “to create a factory that can produce a gigawatt of new AI infrastructure every week.” Easier said than done of course, but wow the acceleration is real. According to an internal memo, OpenAI has 9x’d their capacity this year, and wants to do another 125x by 2033. Something like 250GW would be needed, equivalent to 250 large nuclear power plants.

OpenAI and chip maker AMD have signed an agreement to “deploy 6 gigawatts of AMD GPUs over multiple years.” They also announced a plan with Nvidia “to build AI data centers consuming up to 10 gigawatts of power, with additional projects totaling 17 gigawatts already in motion.” In an entirely separate announcement, OpenAI is partnering with Broadcom (semiconductor manufacturer) “to deploy 10GW of chips designed by OpenAI.” So that’s 26GW worth of new announcements within a few weeks, just by OpenAI.
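For anyone who wants to check the tally, here is a minimal Python sketch of how the 26GW figure adds up. It assumes the total counts only the three headline deals quoted above (AMD, Nvidia, Broadcom) and leaves out the additional 17 gigawatts described as already in motion; the dictionary and variable names are just illustrative.

```python
# Headline capacity figures (in GW) from the three OpenAI announcements quoted above.
# Assumption: the 17 GW of "additional projects" is not part of the 26 GW tally.
announcements_gw = {
    "AMD": 6,
    "Nvidia": 10,
    "Broadcom": 10,
}

total_gw = sum(announcements_gw.values())
print(f"New announcements: {total_gw} GW")
```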

Google has decided to spend some big bucks in India: $15 billion “to establish our first full-stack AI Hub in India.” The money will be spent over the next 5 years to set up the 1GW data center and AI Hub. The company also announced $9 billion for South Carolina, to be spent through 2027, for expanding their “Berkeley County data center campus and support the continued construction of two new sites in Dorchester County, strengthening the state’s role as a critical hub for American infrastructure.”

Meta (the parent company of Facebook) announced a small (relatively speaking) “state-of-the-art data center in El Paso, Texas, that will have the ability to scale to 1GW.” It’s all part of their plan to build towards superintelligence.

I saw an estimate that by 2035, AI data centers could be using 1,600 TWh a year. By my rough calculation, that works out to about 182GW of capacity running around the clock. I think the estimate may be a serious underestimate.
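The rough calculation is just annual energy divided by the hours in a year. Here is a small Python sketch of it. The function names are my own, and the `utilization` parameter is a hypothetical knob (not from the estimate) to show how the required installed capacity grows if the hardware does not run flat out all year.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours, ignoring leap years


def average_gw(twh_per_year: float) -> float:
    """Average continuous power (GW) that delivers the given annual energy (TWh)."""
    gwh_per_year = twh_per_year * 1000  # 1 TWh = 1,000 GWh
    return gwh_per_year / HOURS_PER_YEAR


def capacity_gw(twh_per_year: float, utilization: float) -> float:
    """Installed capacity (GW) needed if sites only average `utilization` of peak.

    `utilization` is a hypothetical fraction between 0 and 1, used for illustration.
    """
    return average_gw(twh_per_year) / utilization


print(round(average_gw(1600), 1))  # 182.6
```

At anything less than 100% utilization the installed capacity has to be higher than the average draw, so 182GW is best read as a floor for that 1,600 TWh figure.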

What will all that compute be used for? Photo and video generation for sure. Definitely writing billions of emails, reports, and memos. Powering robots as they take our jobs? Possibly. But one incredible thing they’ll be doing is making our medical care better, and rapidly accelerating scientific discoveries.

A new study showed that when multiple AIs work together, this “AI council” aced the US medical licensing exams, scoring 97% and outperforming any individual AI.

AI also passed the CFA Level III, the world’s hardest finance exam, scoring 79% (most humans fail).

A Google AI called C2S-Scale 27B (creative, I know) “generated a novel hypothesis about cancer cellular behavior, which scientists experimentally validated in living cells.” Essentially what this means is that the model “demonstrated fluency in the language of human biology. Listening to what cells were saying, understanding their context, and talking back with a new idea that nature validated.”

Another group working in California used AI to map 1,300 regions of the mouse brain. Here’s the kicker: some were previously uncharted, and have now been discovered thanks to the work this AI-human team has done.

It’s not just in biology either. A theoretical physicist used GPT-5 to rediscover the results of some work he’d done on black holes; the model got there completely independently, without being given the correct result beforehand.

It’s early days still, but I expect we’ll start seeing some really incredible results in the next year or so, the number and usefulness of which will grow exponentially thereafter.

That’s all for now. AI Updates will be back four weeks from now. Let me know what you thought!

Thank you all for reading — and until next time, keep your eyes on the horizon.

-Owen

Great roundup! Not too long and very digestible, which is great in such a fast-moving, complex field.

The 26GW worth of capacity announcements from OpenAI alone within just weeks really underscores how the bottleneck has shifted from compute availability to power infrastructure. What's particularly compelling about the Broadcom partnership is that OpenAI is designing their own chips, which signals a longer-term strategy beyond just purchasing GPUs from Nvidia or AMD. The fact that AI is already making novel hypotheses about cancer cellular behavior and independently rediscovering black hole physics shows we're past the point of just pattern matching. The exponential curve you highlighted isn't just about more compute, it's about compute being used to discover fundamentals that unlock even faster progress in science and engineering.