AI Updates #2

The latest company news, AI infrastructure, and progress with AI towards scientific discoveries

Welcome everyone to AI Updates #2, where my mission remains distilling the firehose of AI news into something you can actually read before the next breakthrough drops.

If you look below at today’s quote, I actually disagree with Edsger, in that I find the question very interesting indeed, though I think the analogy he makes is a good one. Can a submarine swim? Arguably no, not if we look at what life does. But the end result is mostly the same. Ditto for artificial intelligence, it would seem. Whether or not it’s actually thinking, the end result certainly looks increasingly like it is thinking, or something like it anyway.

Alright, hang on tight as we accelerate up the curve!

“The question of whether a computer can think is no more interesting than the question of whether a submarine can swim.”

—Edsger W. Dijkstra

Model updates and news

Elon Musk tasked xAI with building a better version of Wikipedia, which is insanely biased on anything even remotely political or with a “culture war” feel. The result? Grokipedia. Even the 0.1 version was pretty decent, by all accounts being very neutral and even-handed on all topics. It’s now on version 0.2, which lets anyone propose edits to topics. The plan (cannot get much more epic than this) is to rename Grokipedia to the Encyclopedia Galactica once it’s fully mature. According to Elon Musk, “This is intended to be a massive open source repository of all knowledge about the Universe. Many copies will be distributed periodically throughout the solar system to preserve knowledge for future civilizations should ours perish or subside into barbarism. We take the lesson of the Library of Alexandria to heart.” xAI is hiring people to work on Grokipedia, so join up and “Help build the galaxy mind version of the Library of Alexandria and scatter copies throughout space and other planets and moons!” This time, the knowledge won’t be lost.

Jeff Bezos has jumped into the AI ring, creating a new startup called Project Prometheus where he will be the Co-CEO. It’s got $6.2B in startup funding from Bezos, which is definitely buy-in level money. More info in this thread.

Grok 4.1 is out, and includes improvements to “Model personality and quality, low latency, reliability up and down the stack.” From what I’ve seen, it was a noticeable improvement.

Google has announced that Veo is “getting new precision editing capabilities that let you easily add or remove elements from a scene - all while preserving the integrity of your original video.” Think adding glasses to someone in a video, or a cat on one’s shoulder, or a wig.

Google’s Nano Banana Pro image creator is out with some improvements. Pretty cool if you ask me. Here’s a tutorial on how to use it. Sounds like it’s pretty good at diagrams and graphics.

Google has also launched Gemini 3, “the best model in the world for multimodal understanding, and our most powerful agentic + vibe coding model yet. Gemini 3 can bring any idea to life, quickly grasping context and intent so you can get what you need with less prompting.” Apparently it really is quite good.

OpenAI has launched a new browser, ChatGPT Atlas. Currently just available on macOS, it’s looking to compete with Google as a search engine. They’ve also upgraded ChatGPT to 5.1, which includes “improvements in instruction following, and adaptive thinking. The intelligence and style improvements are good too.”

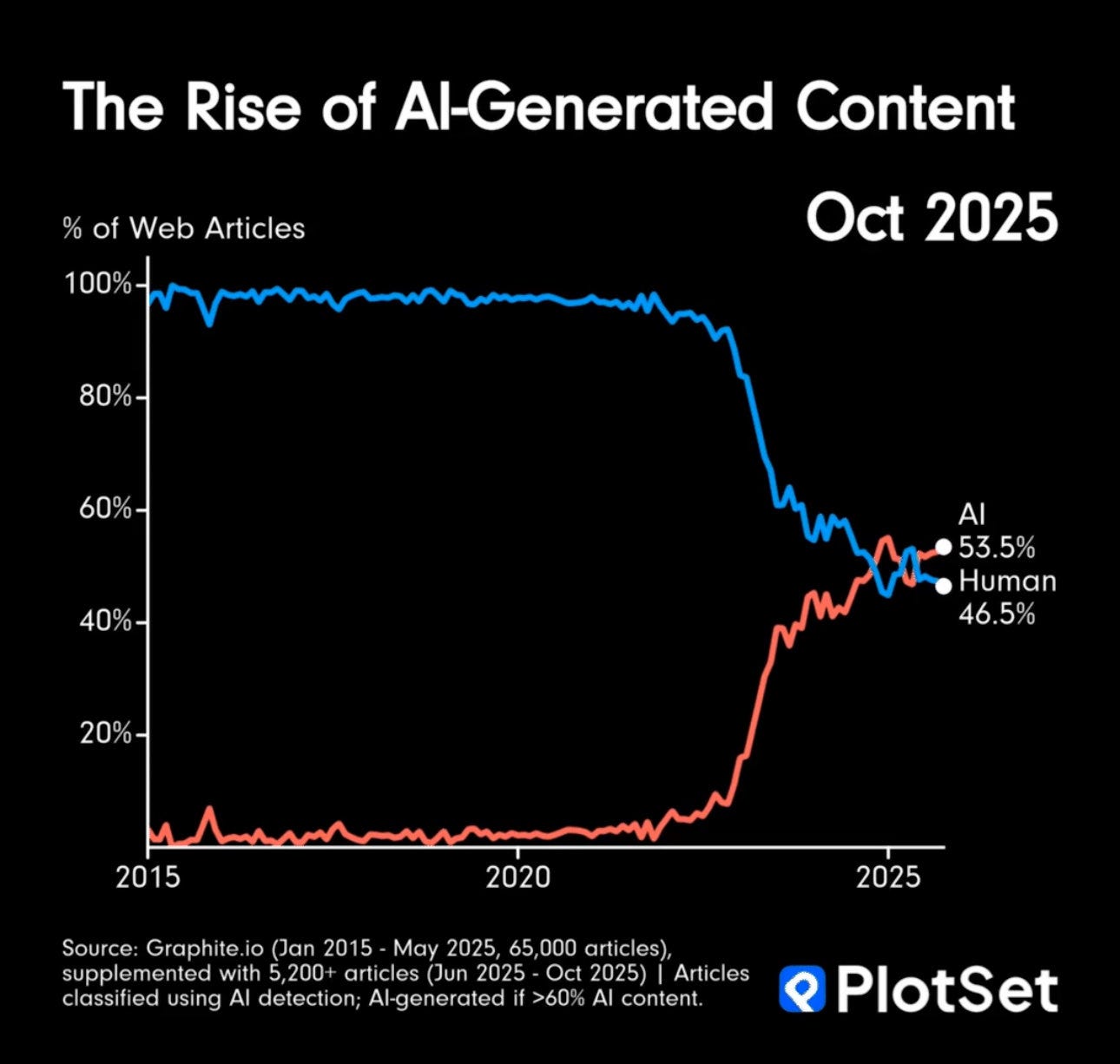

Not sure this rise of AI-generated content is a net positive yet, given the amount of “AI slop” out there. Counterpoint: there was and continues to be plenty of human-created slop out there, so join in at the trough I guess? Eat up, it’s not that bad. Another way of looking at it is that AI has suddenly become good enough to seriously compete with average content written by humans. Not bad for what’s essentially a thinking rock.

Sounds like Meta will start using some chips developed by Google to run its AI. NVIDIA isn’t thrilled, but says that it’s “a generation ahead of the industry.”

Anthropic has dealt with a “highly sophisticated AI-led espionage campaign,” aimed at “large tech companies, financial institutions, chemical manufacturing companies, and government agencies.” Apparently it was China (is anyone surprised?). Moral of the story is that the best defense against a bad guy with AI is a good guy with a bigger, better AI.

A new AI chip maker has entered the ring. Qualcomm is taking on the heavyweights of NVIDIA and AMD. Good on them, let’s see more competition!

Qualcomm isn’t the only new AI chip maker. Startup Extropic has released their alien-looking chip, which is designed to use far less energy than conventional chips.

One more chip to tell you about. Tesla has a new one called AI5 that’s a big step up from their previous chips (did not realize they were even doing that until just now). Production will start next year.

AI Infrastructure

NVIDIA wants to build datacenters in space, announcing last month a partnership with a company called Starcloud to eventually build massive AI infrastructure off-world. The idea is to build a 5GW datacenter in orbit, with “super-large solar and cooling panels approximately 4 kilometers in width and length.” There’s a good writeup on it here from Ars Technica, and another here by Peter Hague. This is already being tested, with NVIDIA having launched one of their H100 GPUs into orbit, where it will run an open-source version of Gemini.

Google is jumping onto the datacenters in space bandwagon too, planning to launch their Project Suncatcher test of two satellites in early 2027.

Did I mention that SpaceX is getting in on this too? They say that version 3 of their Starlink satellites could be networked into a massive, distributed orbital datacenter.

Meta is moving forward to build their Hyperion Data Center, which will cost roughly $27 billion and eventually have 5GW of compute. They’re partnering with a fund managed by Blue Owl Capital to get it going.

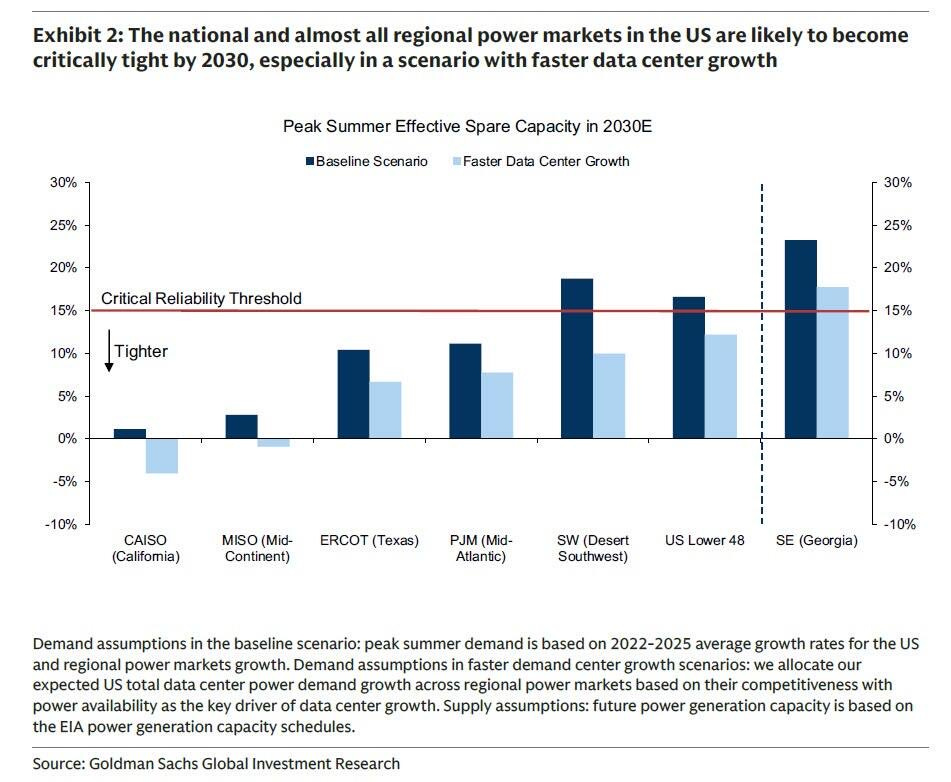

Power is going to be the limiting factor for the speed at which new AI datacenters are built. Spare capacity is shrinking fast across the United States. And it’s not just the U.S.: Alberta has awarded the last 1.2GW of its spare grid capacity for datacenters to two projects. The remaining 37 projects in the queue, with 19.4GW of demand, will either need to wait (likely for years while new power plants are built) or build their own.

Google just dropped a cool $40B investment into Texas for AI and cloud infrastructure. This will all be spent in just the next 2 years.

Speaking of massive investments, Anthropic decided to top that by announcing a $50B investment to build datacenters in Texas and New York.

Brookfield isn’t skimping either, with a $100B “global AI infrastructure program - backed by NVIDIA and the Kuwait Investment Authority - to build out the full AI value chain from power and land to data centers and compute.”

Amazon Web Services just brought online their Project Rainier, “one of the world’s largest AI compute clusters.” Microsoft just announced a new datacenter in Atlanta called Fairwater.

One last quick note. There’s a lot of noise on how much water datacenters use. Turns out, not that much.

AI uses – science, engineering, etc.

The U.S. Department of Energy has launched the Genesis Mission, a “Manhattan Project” to double American science and engineering productivity within a decade using artificial intelligence. The mission will integrate the nation’s 17 National Laboratories, connecting the world’s best supercomputers, AI systems, next-generation quantum computers, and advanced scientific instruments into what Energy Secretary Chris Wright calls “the world’s most complex and powerful scientific instrument ever built.” The platform will mobilize roughly 40,000 DOE scientists and engineers alongside private sector innovators to tackle three priorities: American energy dominance through accelerated advanced nuclear and fusion development, discovery science powered by quantum computing, and national security through AI-enhanced defense materials and nuclear stockpile maintenance. The initiative builds on Trump’s earlier directive to remove barriers to AI innovation and reduce dependence on foreign adversaries in the technology race. Now we’re cooking!

Interesting: apparently those with “higher intelligence generally show lower glucose metabolism during task performance, suggesting they require less energy to achieve the same or better results, and their neuronal computation is more efficient.” Could this translate to AI somehow? Maybe as it gets smarter, its energy use per “thought” will improve.

We’re so early in terms of how AI will impact everything. A new AI model “trained to use computational tools might accelerate mathematical discovery.” How many unsolved mathematical problems will be solved in the next decade? My bet is a non-zero number. Take for example this Chinese model called AI-Newton, capable of autonomously “discovering” known physics principles like Newton’s second law after “being fed experimental data.”

There’s a new AI Scientist called Kosmos, created by Edison Scientific. It’s estimated to do “6 months of work in a single day. One run can read 1,500 papers and write 42,000 lines of code. At least 79% of its findings are reproducible.”

That’s it for this edition; AI Updates will be back in your inboxes four weeks from now. Thank you all for reading — and until next time, keep your eyes on the horizon.

-Owen
